PISA results give a snapshot of a nation’s performance, but teachers need more than that to improve student outcomes

Every three years, the Organisation for Economic Co-operation and Development (OECD) tests the reading, maths and science of 15-year-olds in what’s known as PISA (Programme for International Student Assessment). The tests are designed as a measure of global competence in the skills and knowledge of these three important areas, and the latest assessment, which took place in 2018, involved over 19,000 pupils across 616 schools in the UK and Ireland.

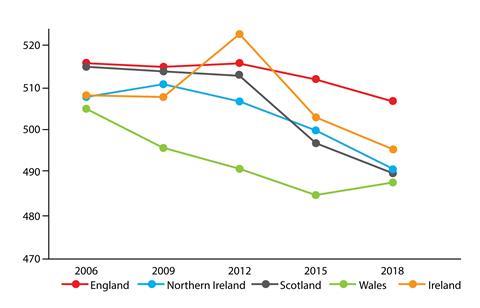

The National Foundation for Educational Research (NFER) ensures that the sample of pupils and schools chosen is representative at national level. The NFER also analyses the results, and its chief social scientist, Angela Donkin, thinks the results look to be ‘on trend’. What is evident is a decline in science scores for all the UK home nations and Ireland since 2006. John Jerrim, a research associate at FFT Education Datalab, suggests the greatest concern is the sustained fall in performance in Scotland, Wales and Northern Ireland. The decline is especially pronounced in the scores for the highest-achieving pupils.

However, these nations’ results are still significantly above the OECD average, because scores for science in OECD countries also fell. ‘I don’t have answers as to why. Perhaps everyone is struggling to find science teachers,’ suggests Angela.

What do the scores tell teachers?

‘To me it’s an indicator,’ says Philip Williamson, head of chemistry at Cookstown High School in Northern Ireland. ‘I wouldn’t put total faith in it – I’m far more invested in my school’s own benchmarking.’ Another chemistry teacher, Helen, has an academic interest in the figures: ‘It’s useful just to get a feel for what’s going on with the national picture … but they don’t provide clues [as] to what’s going on in my school.’

Steve Nelmes, a regional programme manager at the RSC, has viewed the PISA scores from several perspectives. As an A-level chemistry teacher, he says he had ‘little or no interest’ in them, although he frequently read that performance wasn’t good enough. As a head teacher, he paid much closer attention. But exchange programmes made him aware that colleagues across the world were also being told they weren’t doing well enough. Meanwhile, teachers from schools in China and South Korea came to learn what they could from UK teachers, even though their countries were outperforming the UK in PISA.

‘So what do you do as a teacher?’ he comments. ‘You choose what you believe to be best for those children in front of you and work with what you’ve been given.’

Helen agrees: ‘We may worry about the results, but my biggest concern is my pupils and how are they going to do in their prelims and how [I can] support them. To go beyond that – I don’t have the time or the capability … It’s up to policymakers to investigate the figures, look at what’s going on elsewhere and give direction to teachers as to what strategies we could try.’

Education policy changes

‘One of the key things we look at is [whether] there is a problem and, [if so,] can we do something about it. [We also] look internationally to see if other countries have that problem,’ says Angela. PISA ‘is a useful tool to be able to look internationally.’ John points out that, with education a devolved power, ‘PISA remains the premier resource to measure the divergence of education systems and achievement’ across the UK’s home nations. He doesn’t think enough work is done to use PISA to inform educational research, policy and practice.

The science results need to be read against the backdrop of policy changes in the UK and Ireland. In England, science coursework has been replaced with mandatory practical work to address engagement. The fact that more pupils are now taking STEM subjects might be an indicator of impact, suggests Angela.

Life satisfaction

The OECD also asks questions about pupil resilience and life satisfaction. John Jerrim points out that 15-year-olds in the UK are much less likely to say that they are satisfied with life than those in almost every other country that participated in PISA 2018. Indeed, their satisfaction has fallen faster than in any other country (with comparable data) over the last three years. And that correlates strongly with a fear of failing.

‘What often gets missed is the timing of the assessment – we’re the last country to do it – just six months before GCSEs,’ he comments. Coming so close to national exams, this can be a stressful time for students. Teachers may also be interested to know that the majority of pupils who took the PISA tests say they put in less effort than they would for national exams.

In Ireland, a new science curriculum for the junior years of secondary school was introduced in 2016, so few of the pupils sitting PISA tests in 2018 will have studied under the new system. In contrast, in Scotland most pupils will have come through its Curriculum for Excellence, introduced in 2010. For Alison Murphy, a former physics teacher and now full-time union official at the Educational Institute of Scotland (EIS), the decline is ‘disappointing’.

The results, she suggests, ‘match with things teachers have been saying – [that] the implementation was too rushed, and we haven’t got articulation between the different phases clearly enough. At least some of what we’re seeing is a consequence of [the reality that we can’t] develop well if we’re constantly scrabbling to catch up with the latest changes.’ Indeed, says Alison, teachers in Scotland complain that continual changes to the curriculum have meant a constant rewriting of materials, while a shortage of specialist teachers has resulted in multi-level classes, ‘which don’t work well in science.’

Wales has responded to earlier PISA scores: a new curriculum to be introduced in 2022 ‘is more thematically based, as in Scotland,’ explains Cerian Angharad, a former science teacher and co-founder of education consultancy See Science. The Welsh government wants science scores to reach 500 in the next round of tests in 2021. ‘After the 2015 results they said they wanted to improve the portion of top-performing learners,’ says Cerian. More resources are now being put into teaching. But Cerian adds, ‘It’s difficult to know how long things take to impact in science – and you’re only getting a snapshot.’

Geoff Barton, general secretary of the Association of School and College Leaders (ASCL) union, agrees: ‘PISA is just one measure of an education system and we should be careful not to overemphasise its significance.’ He points to the increased uptake of sciences at GCSE and A-level.

Online moves

In 2015, the previously pen-and-paper tests became computer-based. This allowed examiners to test students’ ability to apply scientific investigative skills in virtual experiments and evaluate outcomes – ultimately testing how well students can use their science knowledge, as opposed to how many scientific facts they know.

John Jerrim, a research associate at FFT Education Datalab, argues that conducting the tests online makes it difficult to compare the latest two rounds with results obtained between 2000 and 2012.
