Put your learners’ knowledge of kinetics to the test

The topics covered in this Starter for ten activity are: rate determining step, calculating reaction rate, measuring reaction rate in the lab, determining the rate equation, Arrhenius and rate.

Example questions

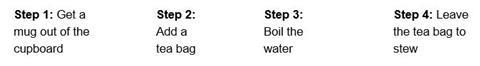

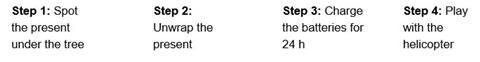

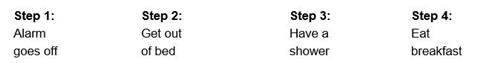

For each of the everyday processes described below, identify the step that slows the process down.

(a) Making a cup of tea

(b) Playing with a model helicopter received as a Christmas present

(c) Getting out of the house in the morning on time

The overall rate of these processes is controlled by the rate of the slowest step. For a chemical reaction we call this step the rate determining or rate limiting step.
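The idea that the slowest step controls the overall process can be sketched with a short calculation. The step names and timings below are hypothetical values chosen purely for illustration; they are not taken from the worksheet.

```python
# Hypothetical timings (in seconds) for the steps in making a cup of tea.
# These numbers are illustrative assumptions, not measured data.
tea_steps = {
    "fill kettle": 15,
    "boil water": 180,
    "pour water over tea bag": 10,
    "add milk": 5,
}


def slowest_step(steps):
    """Return the name of the step with the longest duration."""
    return max(steps, key=steps.get)


def fraction_of_total(steps, name):
    """Return the fraction of the total time taken up by one step."""
    return steps[name] / sum(steps.values())


limiting = slowest_step(tea_steps)
share = fraction_of_total(tea_steps, limiting)
print(f"Slowest step: {limiting} ({share:.0%} of the total time)")
```

With these assumed timings, speeding up any other step barely changes the total time, which is the everyday analogue of a rate determining step.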

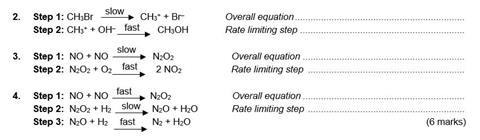

For each of the multi-step reactions below, write the overall equation for the reaction and identify the rate limiting step.

In a chemical reaction, any step that occurs after the rate determining step will not affect the rate. Therefore, any species that is involved only in steps after the rate determining step does not appear in the rate expression.
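As an illustration (a standard textbook example, not one of the worksheet's own questions), the reaction of nitrogen dioxide with carbon monoxide at low temperatures is commonly described by a two-step mechanism:

$$
\begin{aligned}
\text{Step 1 (slow):}\quad & \mathrm{NO_2 + NO_2 \rightarrow NO_3 + NO} \\
\text{Step 2 (fast):}\quad & \mathrm{NO_3 + CO \rightarrow NO_2 + CO_2} \\
\text{Overall:}\quad & \mathrm{NO_2 + CO \rightarrow NO + CO_2}
\end{aligned}
$$

Step 1 is the rate determining step, so the rate expression is

$$
\text{rate} = k[\mathrm{NO_2}]^2
$$

Carbon monoxide takes part only in the fast step that follows the slow step, so it does not appear in the rate expression: the reaction is zero order with respect to CO.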

Notes

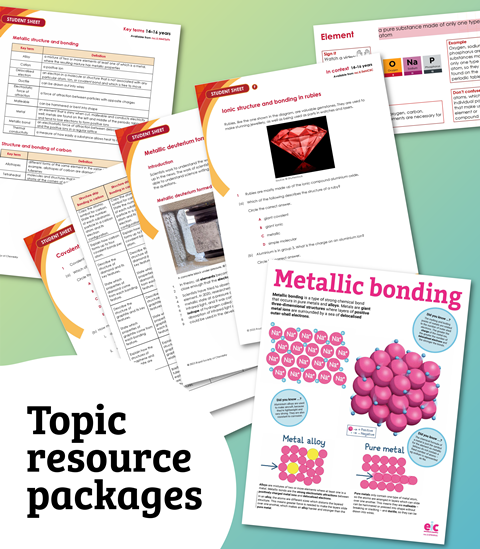

For a full version of this question and answer sheet, see the ‘Downloads’ section below. An editable version is also available.

Downloads

Kinetics - editable

Word, Size 0.49 MB

Kinetics

PDF, Size 0.48 MB

Starters for 10: Advanced level 2 (16–18)

This chapter in our Starters for ten series covers: kinetics, equilibria, acids and bases, carbonyl chemistry, aromatic chemistry, compounds with amine groups, polymers, structure determination, organic synthesis, thermodynamics, periodicity, redox equilibria, transition metal chemistry, and inorganics in aqueous solution.
